Are we placing machines in a religious or magical context and anthropomorphizing them? Having industry set the rules is like having the fox guard the henhouse. This is an excellent repost originally found here. This article was written for a military audience.

___________________________________________________________

Two hundred years of massive collateral impacts by technology have brought to the forefront of society’s consciousness the idea that some sort of rules for man-machine interaction are necessary, similar to the rules in place for gun safety, nuclear power, and biological agents. But where their physical effects are clear to see, the power of computing is veiled in virtuality and anthropomorphization. It appears harmless, if not familiar, and it often has a virtuous appearance.

Computing originated in the punched cards of Jacquard looms early in the 19th century. Today it carries the promise of a cloud of electrons from which we make our Emperor’s New Clothes. As far back as 1842, the brilliant mathematician Ada Augusta, Countess of Lovelace (1815-1852), foresaw the potential of computers. A protégé and associate of Charles Babbage (1791-1871), conceptual originator of the programmable digital computer, she realized the “almost incalculable” ultimate potential of such analytical engines. She also recognized that, as in all extensions of human power or knowledge, “collateral influences” occur.1

AI presents us with such “collateral influences.”2 The question is not whether machine systems can mimic human abilities and nature, but when. Will the world become dependent on ungoverned algorithms?3 Should there be limits to mankind’s connection to machines? As concerns mount, well-meaning politicians, government officials, and some in the field are trying to forge ethical guidelines to address the collateral challenges of data use, robotics, and AI.4

A Hippocratic Oath of AI?

Asimov’s Three Laws of Robotics are merely a literary device to drive his storylines.5 In the real world, Apple, Amazon, Facebook, Google, DeepMind, IBM, and Microsoft founded www.partnershiponai.org6 to ensure “… the safety and trustworthiness of AI technologies, the fairness and transparency of systems.” Data scientists from tech companies, governments, and nonprofits gathered to draft a voluntary digital charter for their profession.7 Oren Etzioni, CEO of the Allen Institute for AI and a professor at the University of Washington’s Computer Science Department, proposed a Hippocratic Oath for AI.

But such codes are composed of hard-to-enforce terms and vague goals, such as using AI “responsibly and ethically, with the aim of reducing bias and discrimination.” They pay lip service to privacy and human priority over machines. They appear to sugarcoat a culture which passes the buck to the lowliest Soldier.8

We know that good intentions are inadequate when enforcing confidentiality. Well-meant but unenforceable ideas don’t meet business standards. It is unlikely that techies and their bosses, caught up in the magic of coding, will shepherd society through the challenges of the petabyte AI world.9 Vague principles, underwriting a non-binding code, cannot counter the cynical drive for profit.10

Indeed, in an area that lacks authorities or legislation to enforce rules, the Association for Computing Machinery (ACM) is itself backpedaling from its own Code of Ethics and Professional Conduct. Their document weakly defines notions of “public good” and “prioritizing the least advantaged.”11 Microsoft’s President Brad Smith admits that his company wouldn’t expect customers of its services to meet even these standards.

In the wake of the Cambridge Analytica scandal, it is clear that coders are not morally superior to other people and that voluntary, unenforceable Codes and Oaths are inadequate.12 Programming and algorithms clearly reflect ethical, philosophical, and moral positions.13 It is false to assume that the so-called “openness” trait of programmers reflects a broad mindfulness. There is nothing heroic about “disruption for disruption’s sake” or hiding behind “black box computing.”14 The future cannot be left up to an adolescent-centric culture in an economic system that rests on greed.15 The society that adopts “Electronic personhood” deserves it.

Machines are Machines, People are People

After 200 years of the technology tail wagging the humanity dog, it is apparent now that we are replaying history – and don’t know it. Most human cultures have been intensively engaged with technology since before the Iron Age 3,000 years ago. We have been keenly aware of technology’s collateral effects mostly since the Industrial Revolution, but have not yet created general rules for how we want machines to impact individuals and society. The blurring of reality and virtuality that AI brings to the table might prompt us to do so.

Distinctions between the real and the virtual must be maintained if the behavior of the most sophisticated computation machines and robots is to be captured by legal systems. Nothing in the virtual world should be considered real, any more than we believe that the hallucinations of a drunk or drugged person are real.

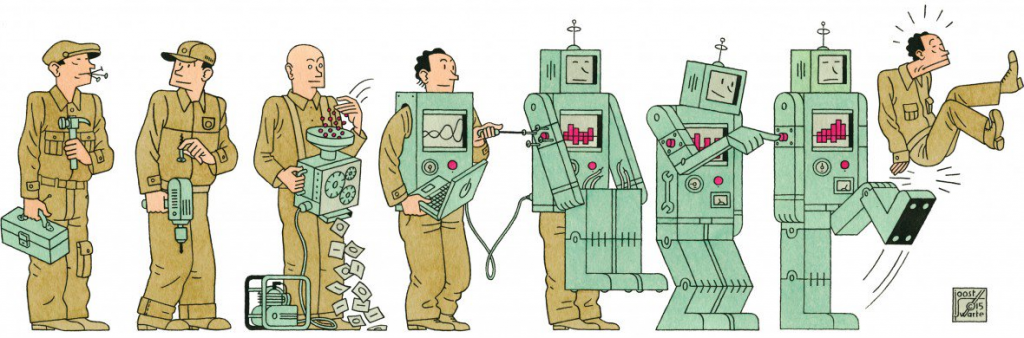

The simplest way to maintain the distinction is remembering that the real IS, and the virtual ISN’T, and that virtual mimesis is produced by machines. Lovelace reminded us that machines are just machines. While in a dark, distant future, giving machines personhood might lead to the collapse of humanity, Harari’s Homo Deus warns us that AI, robotics, and automation are quickly bringing the economic value of humans to zero.16

From the start of civilization, tools and machines have been used to reduce human drudge labor and increase production efficiency. But while tools and machines obviate physical aspects of human work in the context of the production of goods or processing information, they in no way affect the truth of humans as sentient and emotional living beings, nor the value of transactions among them.

The man-machine line is further blurred by our anthropomorphizing machinery, computing, and programming. We speak of machines in terms of human traits, and make programming analogous to human behavior. But there is nothing amusing about GIGO experiments like MIT’s psychotic bot Norman, or Microsoft’s fascist Tay.17 Technologists who fall into the trap of believing that AI systems can make decisions are like children playing with dolls, marveling that “their dolly is speaking.”

Machines don’t make decisions. Humans do. They may accept suggestions made by machines, and when they do, they are responsible for the decisions made. People are and must be held accountable, especially those hiding behind machines. The Holocaust taught us that one can never say, “I was just following orders.”

Nothing less than enforceable operational rules is required for any technical activity, including programming. It is especially important for tech companies, since evidence suggests that they take ethical questions to heart only under direct threats to their balance sheets.18

When virtuality offers experiences that humans perceive as real, the outcomes are the responsibility of the creators and distributors, no less than tobacco companies selling cigarettes, or pharmaceutical companies and cartels selling addictive drugs. Individuals do not have the right to risk the well-being of others to satisfy their need for complying with clichés such as “innovation,” and “disruption.”

Nuclear, chemical, biological, gun, aviation, machine, and automobile safety rules do not rely on human nature. They are based on technical rules and procedures. They are enforceable and moral responsibility is typically carried by the hierarchies of their organizations.19

As we master artificial intelligence, human intelligence must take charge.20 The highest value known to mankind remains human life and the qualities and quantities necessary for the best individual life experience.21 For the transactions and transformations in which technology assists, we need simple operational rules to regulate the actions and manners of individuals. Moving the focus to human interactions empowers individuals and society.

Man-Machine Rules

Man-Machine rules should address any tool or machine ever made or to be made. They would be equally applicable to any technology of any period, from the first flaked stone, to the ultimate predictive “emotion machines.” They would be adjudicated by common law.22

1. All material transformations and human transactions are to be conducted by humans.

2. Humans may directly employ hand/desktop/workstation devices in the above.

3. At all times, an individual human is responsible for the activity of any machine or program.

4. Responsibility for errors, omissions, negligence, mischief, or criminal-like activity is shared by every person in the organizational hierarchical chain, from the lowliest coder or operator, to the CEO of the organization, and its last shareholder.

5. Any person can shut off any machine at any time.

6. All computing is visible to anyone [No Black Box].

7. Personal Data are things. They belong to the individual who owns them, and any use of them by a third-party requires permission and compensation.

8. Technology must age before common use, until an Appropriate Technology is selected.

9. Disputes must be adjudicated according to Common Law.

Machines are here to help and advise humans, not replace them, and humans may exhibit a spectrum of responses to them. Some may ignore a robot’s advice and put others at risk. Some may follow recommendations to the point of becoming a zombie. But either way, Man-Machine Rules are based on and meant to support free, individual human choices.

Man-Machine Rules can help organize dialog around questions such as how to secure personal data. Do we need hardcopy and analog formats? How ethical are chips embedded in people and in their belongings? What degrees of personal freedom and personal risk, and what controls on them, are conceivable? Will consumer rights and government organizations audit algorithms?23 Would equipment sabbaticals be enacted for societal and economic balances?

The idea that we can fix the tech world through a voluntary ethical code emergent from itself paradoxically expects that the people who created the problems will fix them.24 The question is not whether the focus should shift to human interactions that leave more humans in touch with their destiny. The question is at what cost. If not now, when? If not by us, by whom?

___________________________________________________________

Author Information

If you consider this article informative please consider becoming a Patron to support my work.

Going where angels fear to tread...

A special thanks to my friend and Editor, Beate.

Celeste has worked as a contractor for Homeland Security and FEMA. Her training and activations include the infamous day of 9/11, flood and earthquake operations, mass casualty exercises, and numerous other operations. Celeste is FEMA certified and has completed the Professional Development Emergency Management Series.

- Train-the-Trainer

- Incident Command

- Integrated EM: Preparedness, Response, Recovery, Mitigation

- Emergency Plan Design including all Emergency Support Functions

- Principles of Emergency Management

- Developing Volunteer Resources

- Emergency Planning and Development

- Leadership and Influence, Decision Making in Crisis

- Exercise Design and Evaluation

- Public Assistance Applications

- Emergency Operations Interface

- Public Information Officer

- Flood Fight Operations

- Domestic Preparedness for Weapons of Mass Destruction

- Incident Command (ICS-NIMS)

- Multi-Hazards for Schools

- Rapid Evaluation of Structures-Earthquakes

- Weather Spotter for National Weather Service

- Logistics, Operations, Communications

- Community Emergency Response Team Leader

- Behavior Recognition

And more….

Celeste grew up in a military & governmental home with her father working for the Naval Warfare Center, and later as Assistant Director for Public Lands and Natural Resources, in both Washington State and California.

Celeste also has training and expertise in small agricultural lobbying, Integrative/Functional Medicine, asymmetrical and symmetrical warfare, and Organic Farming.

I am inviting you to become a Shepherds Heart Patron and Partner.

My passions are:

- A life of faith (emunah)

- Real News

- Healthy Living

Please consider supporting the products that I make and endorse for a healthy life just for you! Or, for as little as $1 a month, you can support the work that God has called me to do while caring for the widow. This is your opportunity to get to know me better, stay in touch, and show your support.
